Abstract

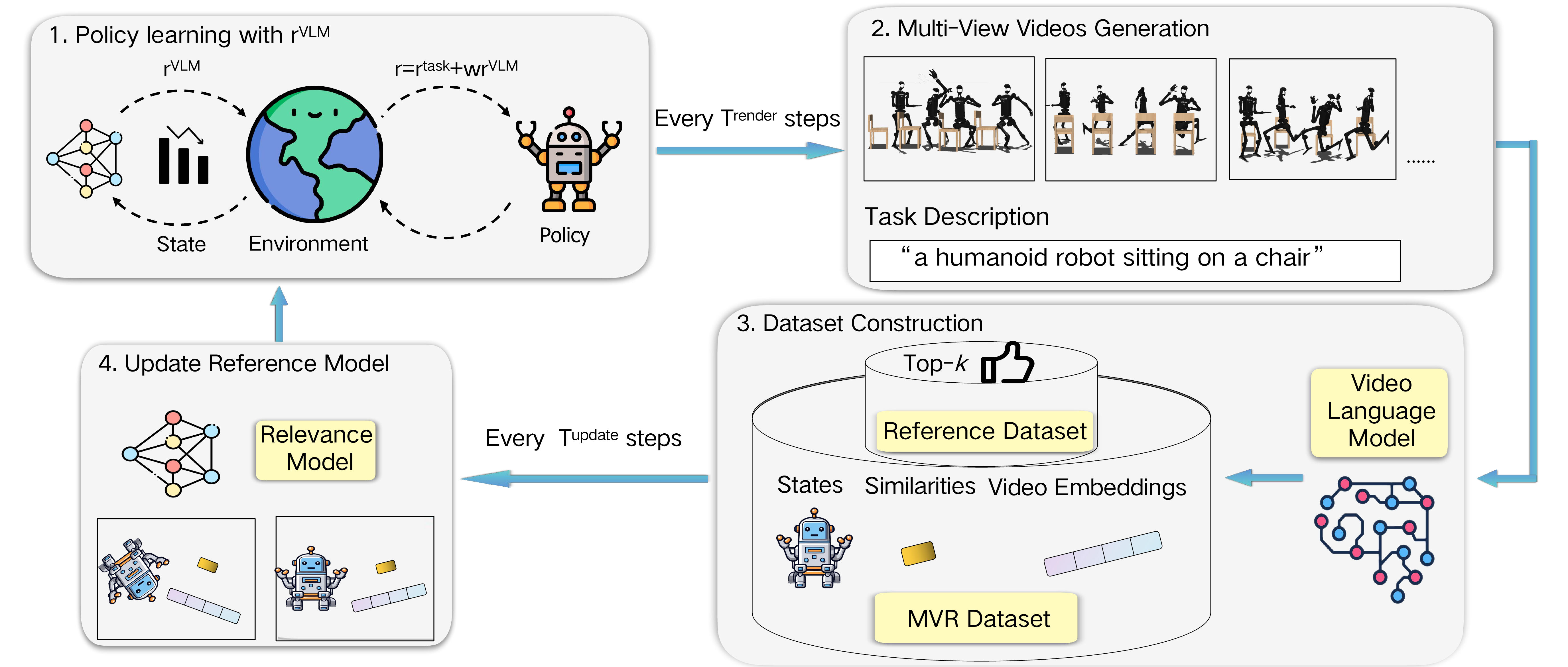

Reward design is of great importance for solving complex tasks with reinforcement learning. Recent studies have explored using image-text similarity produced by vision-language models (VLMs) to augment rewards of a task with visual feedback. A common practice linearly adds VLM scores to task or success rewards without explicit shaping, potentially altering the optimal policy. Moreover, such approaches, often relying on single static images, struggle with tasks whose desired behavior involves complex, dynamic motions spanning multiple visually different states. Furthermore, single viewpoints can occlude critical aspects of an agent's behavior. To address these issues, this paper presents Multi-View Video Reward Shaping (MVR), a framework that models the relevance of states regarding the target task using videos captured from multiple viewpoints. MVR leverages video-text similarity from a frozen pre-trained VLM to learn a state relevance function that mitigates the bias towards specific static poses inherent in image-based methods. Additionally, we introduce a state-dependent reward shaping formulation that integrates task-specific rewards and VLM-based guidance, automatically reducing the influence of VLM guidance once the desired motion pattern is achieved. We confirm the efficacy of the proposed framework with extensive experiments on challenging humanoid locomotion tasks from HumanoidBench and manipulation tasks from MetaWorld, verifying the design choices through ablation studies.

Overview

Method

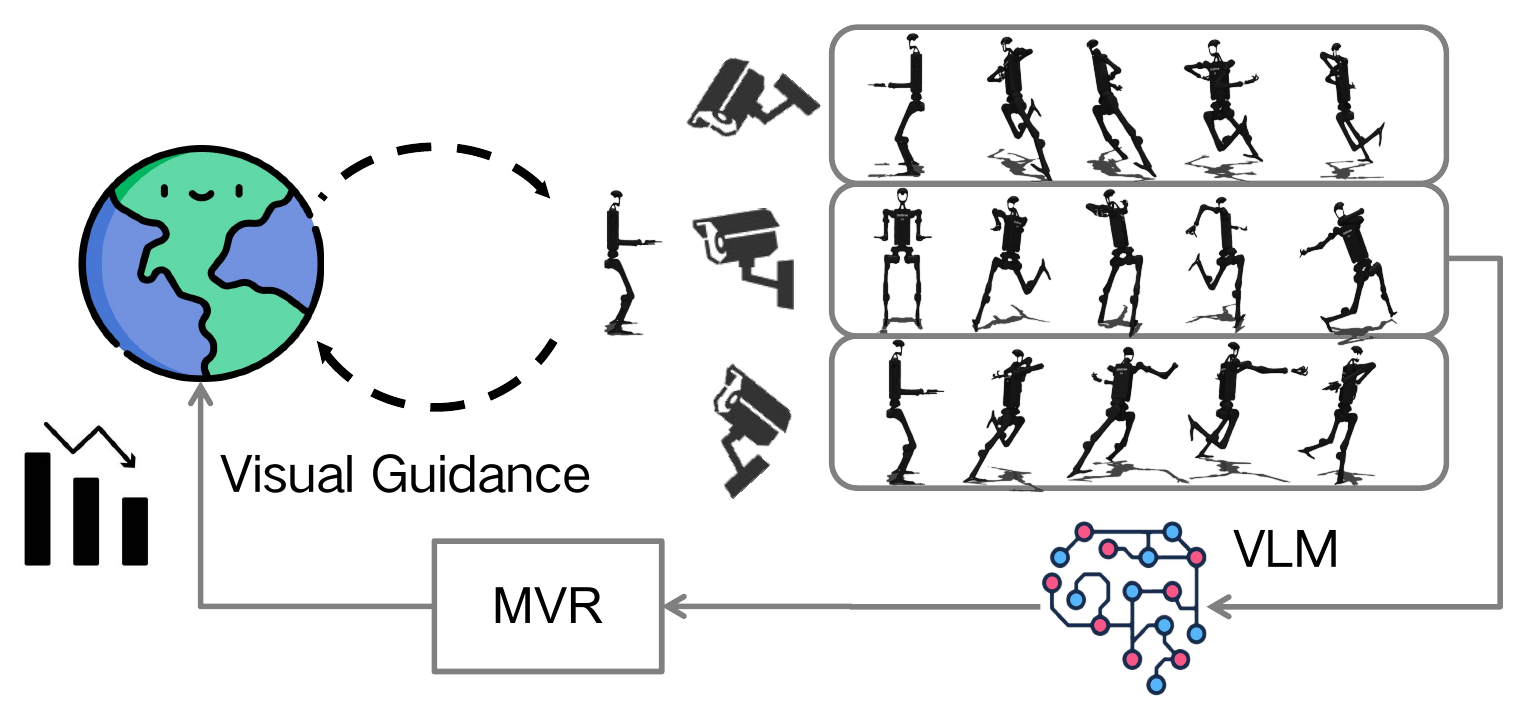

MVR periodically renders recent trajectories into multi-view videos, queries a frozen VLM for similarity scores and embeddings, and updates a state-space relevance function. The learned relevance defines a shaped reward for online off-policy RL.

Instead of regressing raw similarity scores in state space, MVR preserves the ordering induced by video-text comparisons via a Bradley–Terry model, and regularizes representations for stability.
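The ranking idea can be illustrated with a minimal sketch. Everything here is an assumption made for illustration and is not taken from the paper: the function names, the use of a mean to aggregate per-state relevance over a segment, and the NumPy implementation. The sketch only shows the general Bradley–Terry pattern, in which the VLM's video-text similarity decides which of two segments is preferred and the learned relevance is trained to agree with that ordering rather than to match the raw scores. The representation regularizer mentioned above is not shown.

```python
import numpy as np


def segment_relevance(state_scores):
    """Aggregate per-state relevance into one segment-level value.

    Using the mean is an assumption of this sketch, not the paper's choice.
    """
    return float(np.mean(state_scores))


def bradley_terry_loss(rel_a, rel_b, vlm_a, vlm_b):
    """Negative log-likelihood of the ordering implied by the VLM scores.

    Under a Bradley-Terry model, P(a preferred over b) = sigmoid(rel_a - rel_b).
    The segment with the higher video-text similarity is treated as preferred.
    """
    # Orient the difference so that `diff` is (preferred - other).
    diff = rel_a - rel_b if vlm_a >= vlm_b else rel_b - rel_a
    # -log(sigmoid(diff)) = log(1 + exp(-diff)), computed stably.
    return float(np.logaddexp(0.0, -diff))


# Hypothetical per-state relevance values for two rendered segments.
seg_a = segment_relevance([1.5, 2.0, 2.5])
seg_b = segment_relevance([0.0, 0.5, -0.5])

# The VLM scores segment A higher, and the relevance agrees: small loss.
loss_agree = bradley_terry_loss(seg_a, seg_b, vlm_a=0.31, vlm_b=0.22)
# If the VLM ordering were reversed, the same relevance values are penalized.
loss_disagree = bradley_terry_loss(seg_a, seg_b, vlm_a=0.22, vlm_b=0.31)
```

Because only the sign of the VLM score difference enters the loss, the learned relevance is insensitive to the absolute scale of the similarity scores, which is the motivation for ranking over regression in this sketch.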

Shaping guides the policy towards relevant states early, then fades as the policy becomes “indistinguishable” from high-scoring reference behaviors, reducing persistent conflict with task rewards.
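One way such fading behavior could arise is sketched below. This is a hypothetical formulation and not the paper's shaping rule: the function name, the `reference_relevance` level, and the weight `beta` are all assumptions. The sketch penalizes a state only by how far its relevance falls short of a reference level, so the shaping term vanishes once the state is at least as relevant as the reference and only the task reward remains.

```python
def shaped_reward(task_reward, state_relevance, reference_relevance, beta=1.0):
    """Task reward minus a penalty for falling short of a reference relevance.

    Hypothetical form for illustration only. `reference_relevance` stands in
    for the relevance of high-scoring reference behavior, and `beta` scales
    the strength of the guidance.
    """
    # The gap is zero whenever the state is at least as relevant as the reference.
    gap = max(0.0, reference_relevance - state_relevance)
    return task_reward - beta * gap


# Early in training: the state is far from the reference, so guidance is active.
early = shaped_reward(task_reward=1.0, state_relevance=0.2,
                      reference_relevance=0.8, beta=0.5)
# Later: the state matches or exceeds the reference, so the task reward is unchanged.
late = shaped_reward(task_reward=1.0, state_relevance=0.9,
                     reference_relevance=0.8, beta=0.5)
```

In this sketch the guidance is a per-state quantity rather than a fixed additive bonus, which is what lets it switch off for states that already look like the reference behavior.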

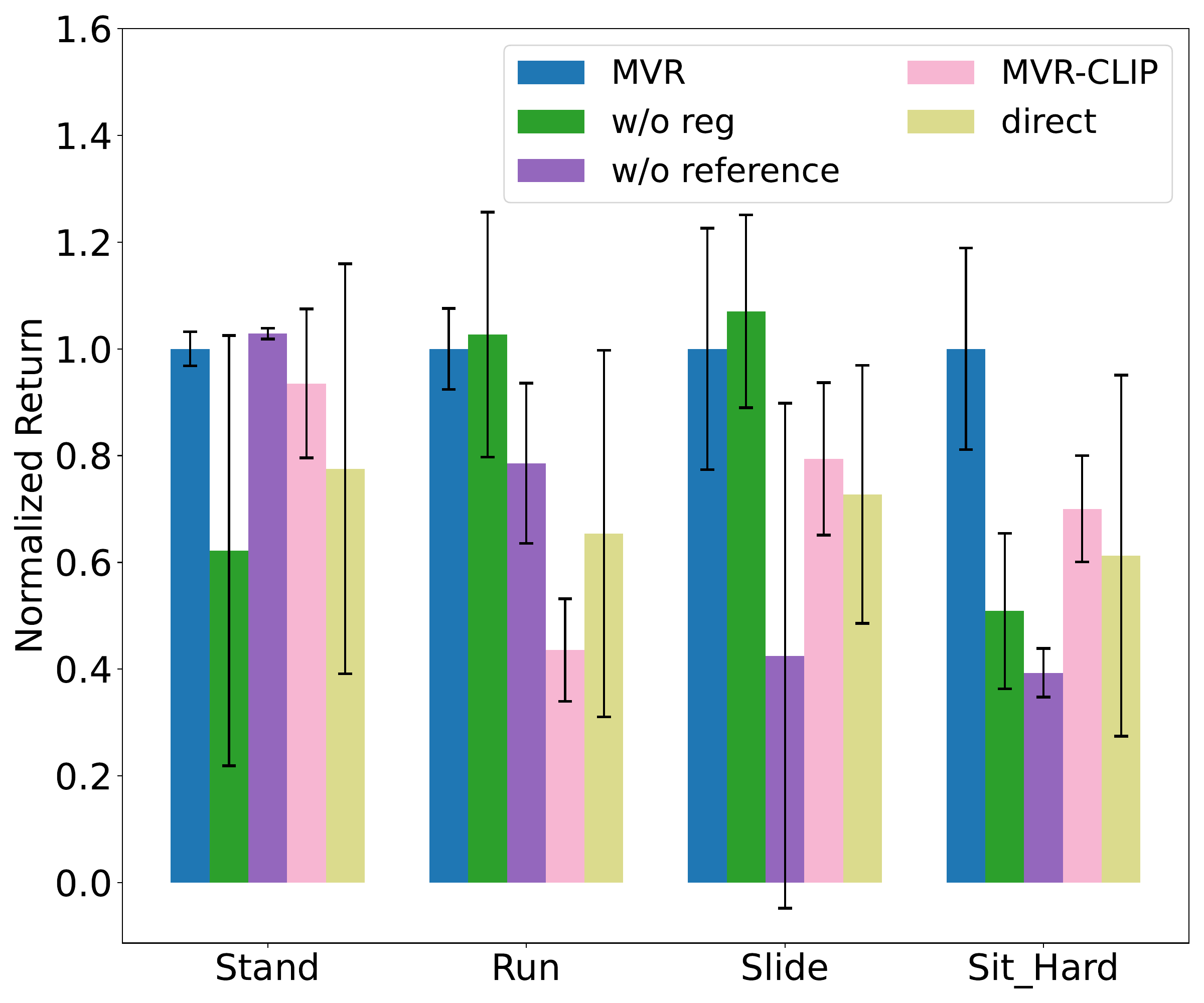

Results

We evaluate MVR on 19 robotics tasks from HumanoidBench (humanoid locomotion) and MetaWorld (manipulation). The figures below are from the paper.

Videos

Qualitative comparison on HumanoidBench. MVR succeeds on Sit_Hard, while TQC fails under the same setting.

BibTeX

@inproceedings{luo2026mvr,
  title = {MVR: Multi-view Video Reward Shaping for Reinforcement Learning},
  author = {Luo, Lirui and Zhang, Guoxi and Xu, Hongming and Yang, Yaodong and Fang, Cong and Li, Qing},
  booktitle = {International Conference on Learning Representations},
  year = {2026}
}

Contact

Corresponding authors: fangcong@pku.edu.cn and dylan.liqing@gmail.com.